A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

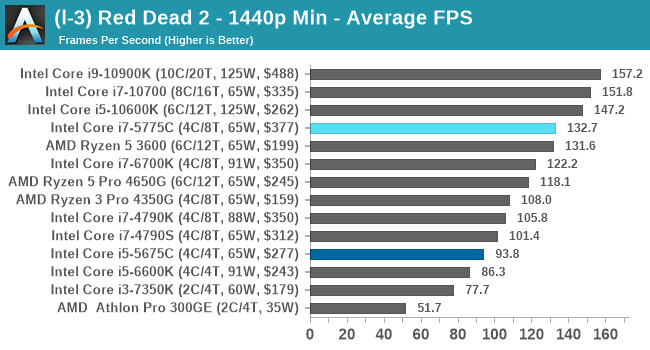

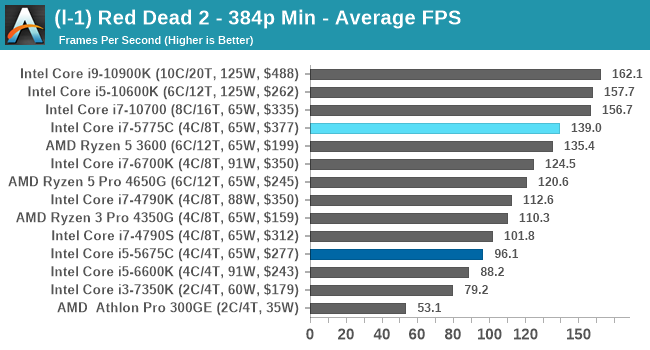

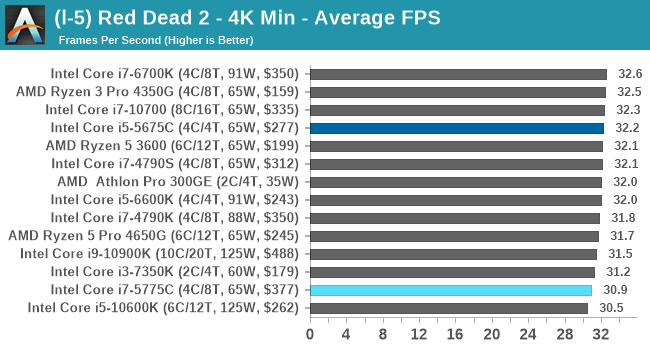

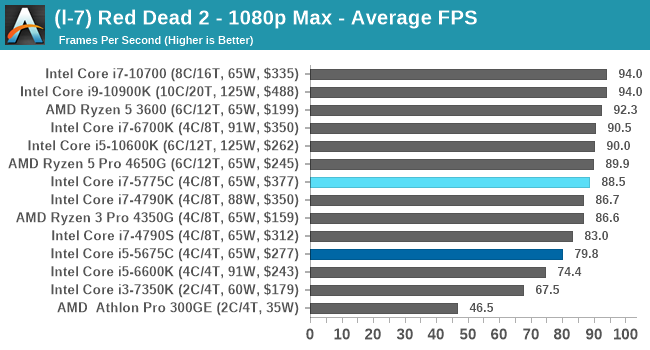

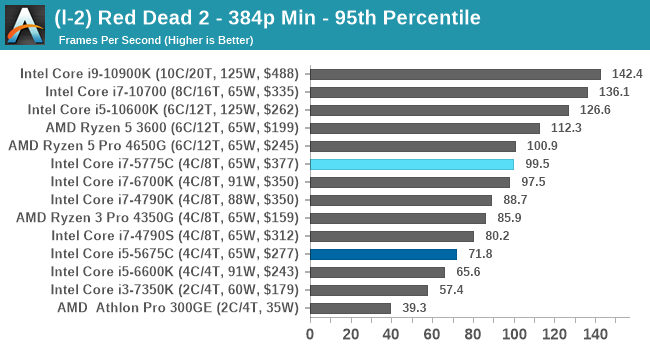

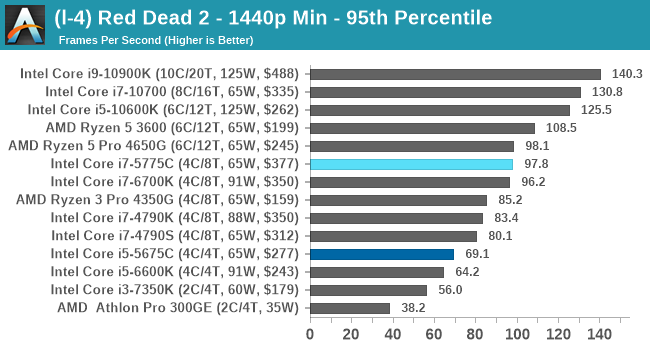

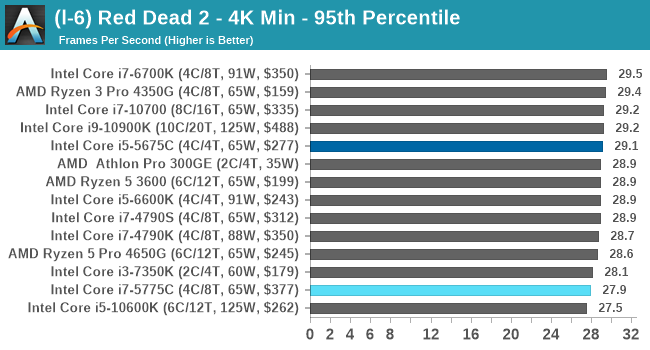

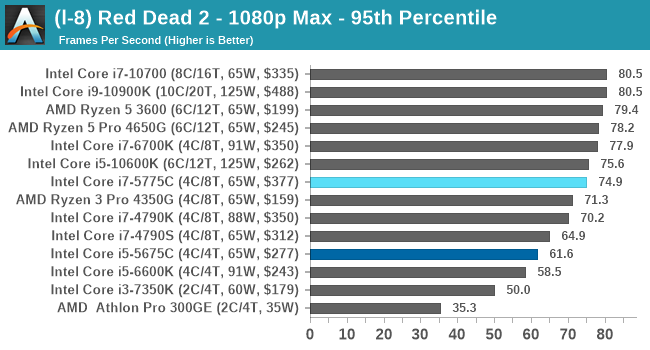

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

Gaming Tests: Red Dead Redemption 2

It’s great to have another Rockstar benchmark in the mix, and the launch of Red Dead Redemption 2 (RDR2) on the PC gives us a chance to do that. Building on the success of the original RDR, the second incarnation came to Steam in December 2019 having been released on consoles first. The PC version takes the open-world cowboy genre into the start of the modern age, with a wide array of impressive graphics and features that are eerily close to reality.

For RDR2, Rockstar kept the same benchmark philosophy as with Grand Theft Auto V: the benchmark consists of several cutscenes with different weather and lighting effects, followed by a final on-rails scene. This time that scene involves robbing a shop, which leads to a shootout on horseback before a ride over a bridge into the great unknown. Luckily, most of the command line options from GTA V are present here, and the game also supports resolution scaling. We have the following tests:

- 384p Minimum, 1440p Minimum, 8K Minimum, 1080p Max

For that 8K setting, I originally thought I had the settings file at 4K and 1.0x scaling, but it was actually set at 2.0x giving that 8K. For the sake of it, I decided to keep the 8K settings.
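The arithmetic behind that accidental 8K setting can be sketched as follows. This is a minimal illustration, assuming that "4K" means 3840x2160 and that the scaling factor applies to each axis (which is consistent with 2.0x on 4K giving 8K); the function name `effective_resolution` is hypothetical and not part of the game or its settings file.

```python
def effective_resolution(width, height, scale):
    """Return the internal render resolution for a per-axis scale factor.

    Assumption: the scale multiplies width and height independently,
    so the pixel count grows with the square of the scale.
    """
    return round(width * scale), round(height * scale)


# Assumed 4K UHD base resolution.
base_width, base_height = 3840, 2160

intended = effective_resolution(base_width, base_height, 1.0)
actual = effective_resolution(base_width, base_height, 2.0)

# Ratio of pixels rendered per frame, actual versus intended.
pixel_ratio = (actual[0] * actual[1]) / (intended[0] * intended[1])

print(intended, actual, pixel_ratio)
```

Under these assumptions, the 2.0x setting renders 7680x4320, which is four times the pixels of the intended 4K run.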

For our results, we run through each resolution and setting configuration for a minimum of 10 minutes, then parse and average the frame time data.
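As a rough illustration of how frame time data can be reduced to the two reported metrics, here is a minimal sketch. The article does not describe the exact method, so everything here is an assumption: frame times are taken to be in milliseconds, the 95th percentile is taken over frame times using the nearest-rank method and then converted to a frame rate, and the function name `summarize_frame_times` is hypothetical.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, p95_fps) from a list of frame times in ms.

    Assumptions (not confirmed by the article):
    - average FPS is total frames divided by total elapsed time
    - the 95th percentile is the nearest-rank percentile of frame time,
      reported as the equivalent frame rate
    """
    if not frame_times_ms:
        raise ValueError("no frame times recorded")

    count = len(frame_times_ms)
    total_ms = sum(frame_times_ms)
    average_fps = 1000.0 * count / total_ms

    ordered = sorted(frame_times_ms)
    # Nearest-rank: smallest rank covering at least 95% of frames,
    # computed with integer arithmetic to avoid float rounding.
    rank = (95 * count + 99) // 100
    p95_frame_time_ms = ordered[rank - 1]
    p95_fps = 1000.0 / p95_frame_time_ms

    return average_fps, p95_fps


# Synthetic example: 90 frames at 10 ms and 10 slow frames at 20 ms.
sample = [10.0] * 90 + [20.0] * 10
average_fps, p95_fps = summarize_frame_times(sample)
print(f"average: {average_fps:.1f} FPS, 95th percentile: {p95_fps:.1f} FPS")
```

Averaging over total elapsed time, rather than averaging per-frame FPS values, avoids overweighting short frames; the percentile figure then shows how much the slowest 5% of frames drag the experience down.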

| AnandTech | Low Res Low Qual | Medium Res Low Qual | High Res Low Qual | Medium Res Max Qual |
| --- | --- | --- | --- | --- |
| Average FPS |  |  |  |  |
| 95th Percentile |  |  |  |  |

All of our benchmark results can also be found in our benchmark engine, Bench.

120 Comments


realbabilu - Monday, November 2, 2020

That larger cache may need a specifically optimized BLAS.

Kurosaki - Monday, November 2, 2020

Did you mean BIAS?

ballsystemlord - Tuesday, November 3, 2020

BLAS == Basic Linear Algebra Subprograms.

Kamen Rider Blade - Monday, November 2, 2020

I think there is merit to having off-die L4 cache.

Imagine the low latency and high bandwidth you can get with shoving some stacks of HBM2 or DDR5, whichever is more affordable and can better use the bandwidth over whatever link you're providing.

nandnandnand - Monday, November 2, 2020

I'm assuming that Zen 4 will add at least 2-4 GB of L4 cache stacked on the I/O die.

ichaya - Monday, November 2, 2020

Waiting for this to happen... have been since TR1.

nandnandnand - Monday, November 2, 2020

Throw in an RDNA 3 chiplet (in Ryzen 6950X/6900X/whatever) for iGPU and machine learning, and things will get really interesting.

ichaya - Monday, November 2, 2020

Yep.

dotjaz - Saturday, November 7, 2020

That's definitely not happening. You are delusional if you think RDNA3 will appear as iGPU first.

At best we can hope for the next I/O die to integrate full VCN/DCN with a few RDNA2 CUs.

dotjaz - Saturday, November 7, 2020

Also doubly delusional if you think RDNA3 is any good for ML. CDNA2 is designed for that.

Adding a powerful iGPU to Ryzen 9 serves literally no purpose. Nobody will be satisfied with that tiny performance. Guaranteed recipe for instant failure.

The only iGPU that would make sense is a mini iGPU in the I/O die for desktop/video decoding, OR an iGPU coupled with a low-end CPU for a complete entry-level gaming SoC, aka APU. Chiplet design makes almost no sense for an APU as long as GloFo is in play.